CUSTOMER FIRST AI BOT PROTECTION

Putting the Customer First

We initially trialled VerifiedVisitors as a POC and Standard Bank was able to quickly see the security benefits of their unique architecture and Machine Learning.

Customers & Partners

VerifiedVisitors is Trusted by

Behavioural Analysis

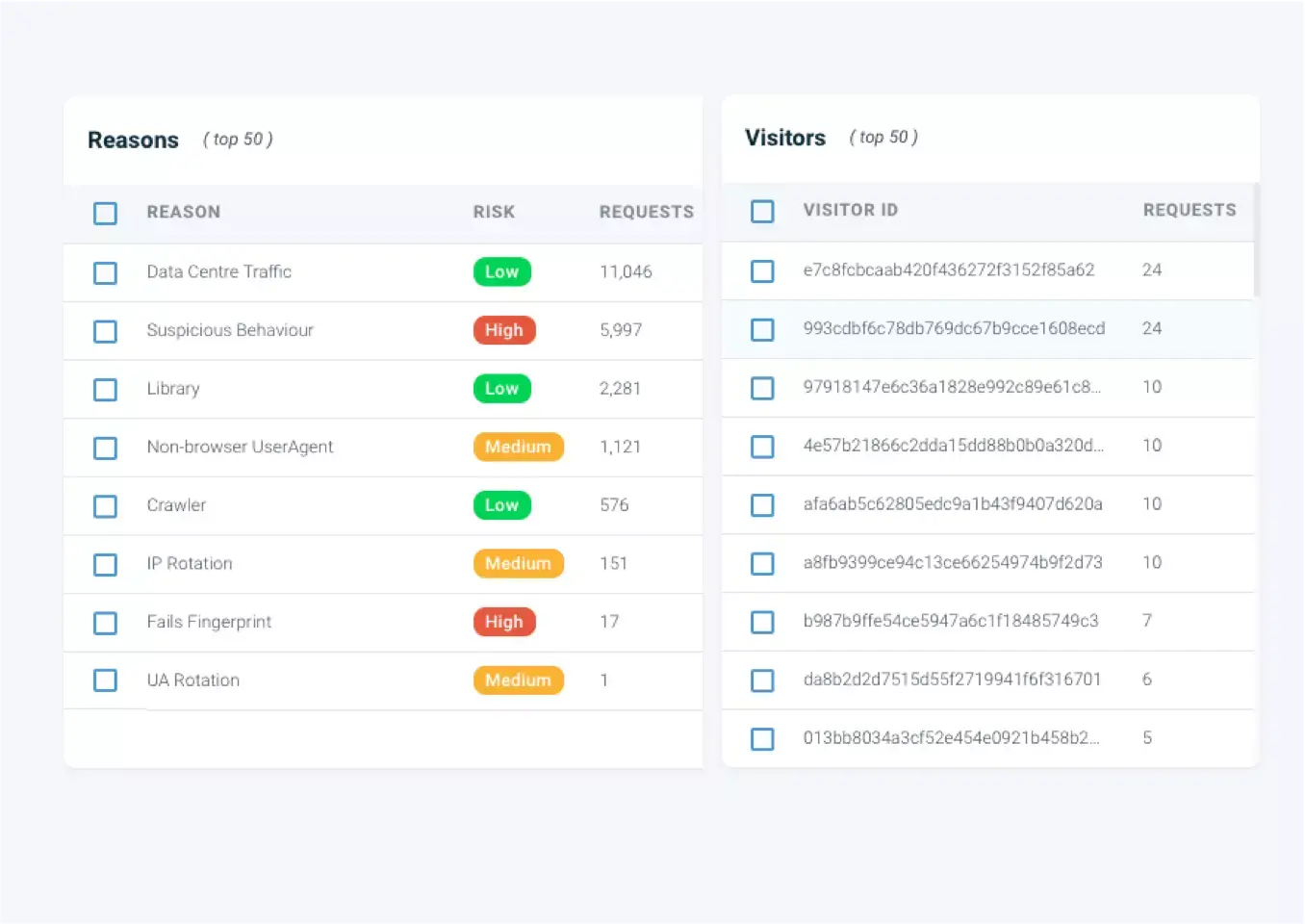

Behavioural analysis picks up unwanted or illegal behaviour hitting API endpoints, where there are no fingerprints to rely on.

See our AI Platform in Action

Command & Control

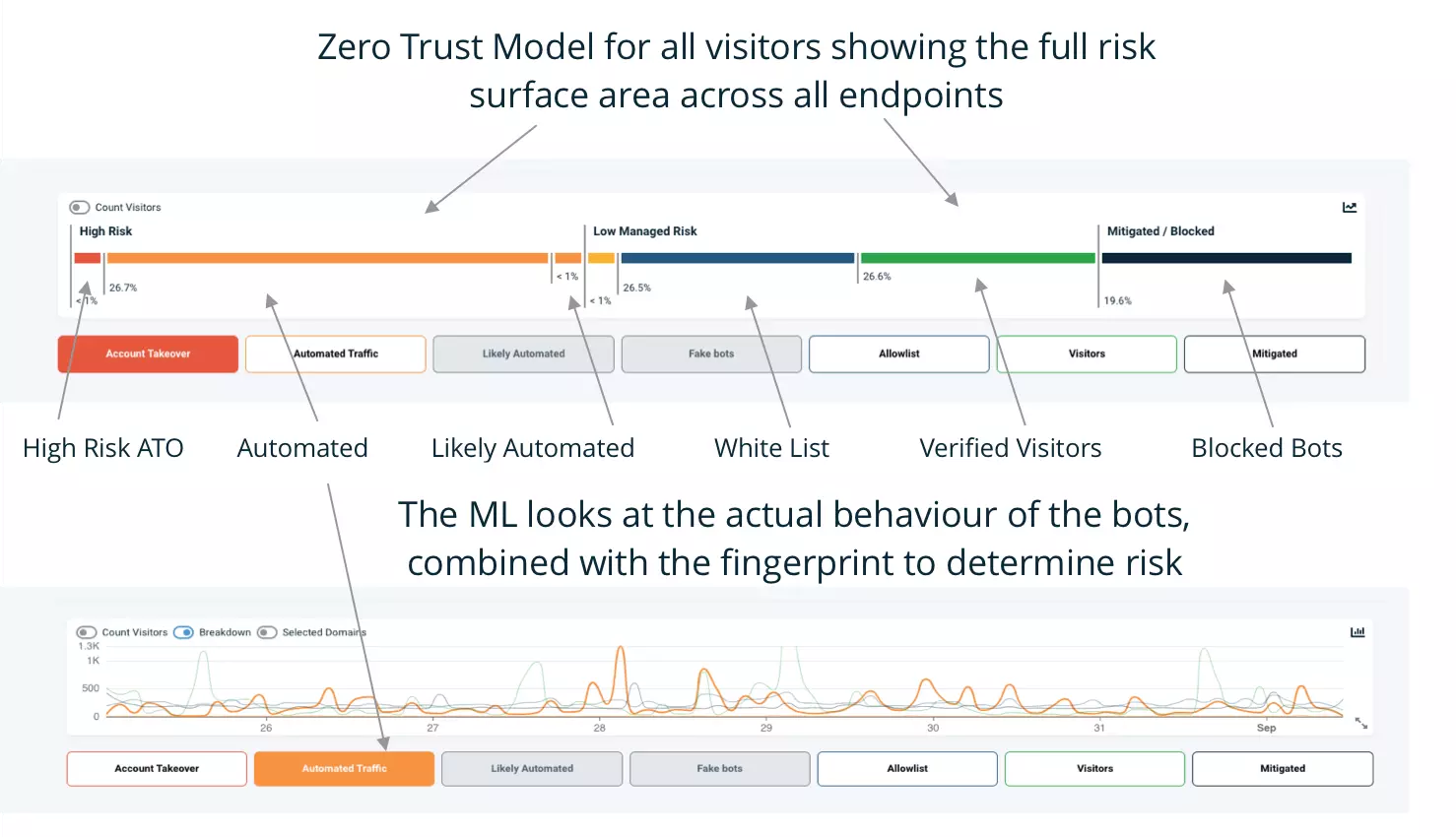

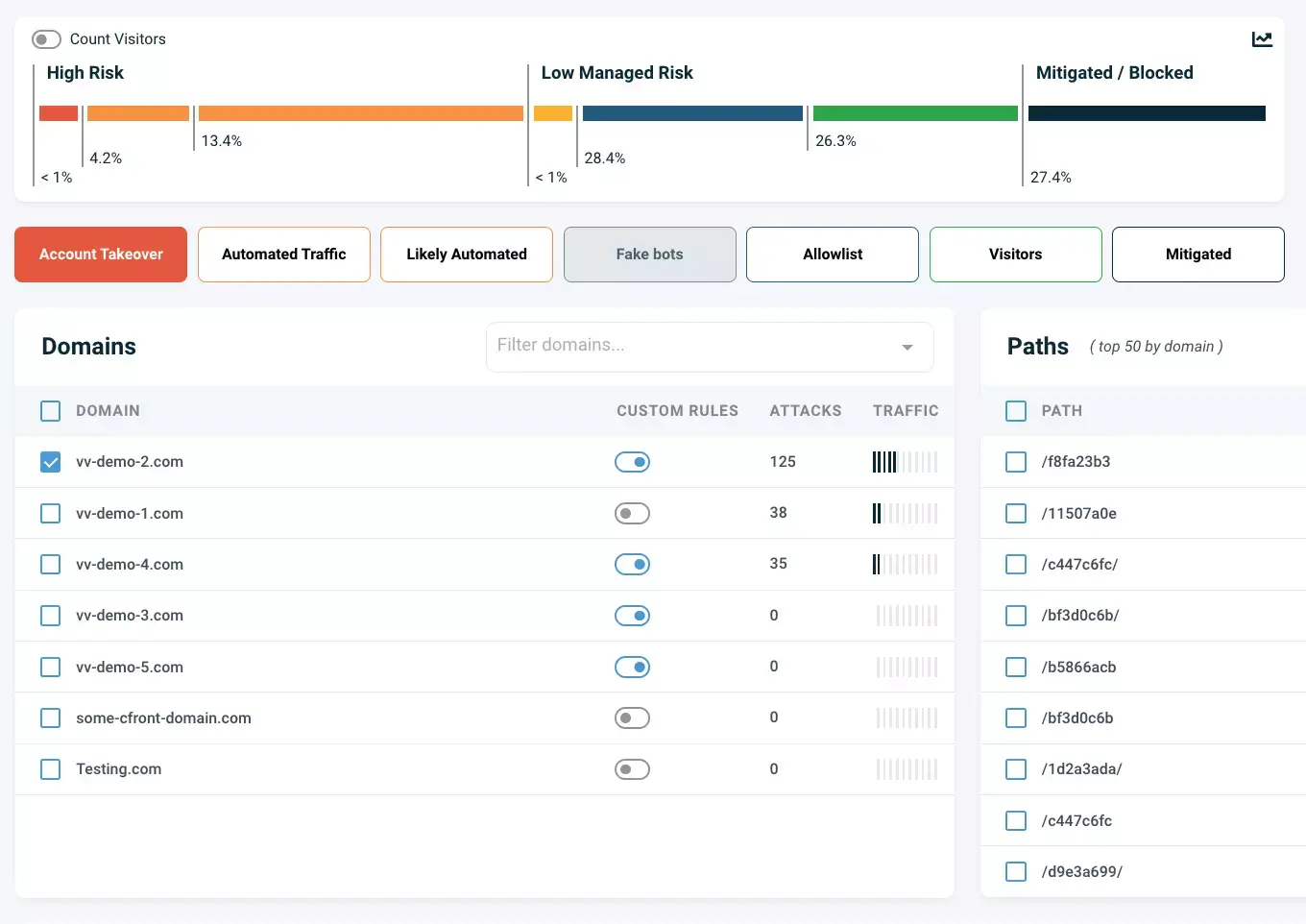

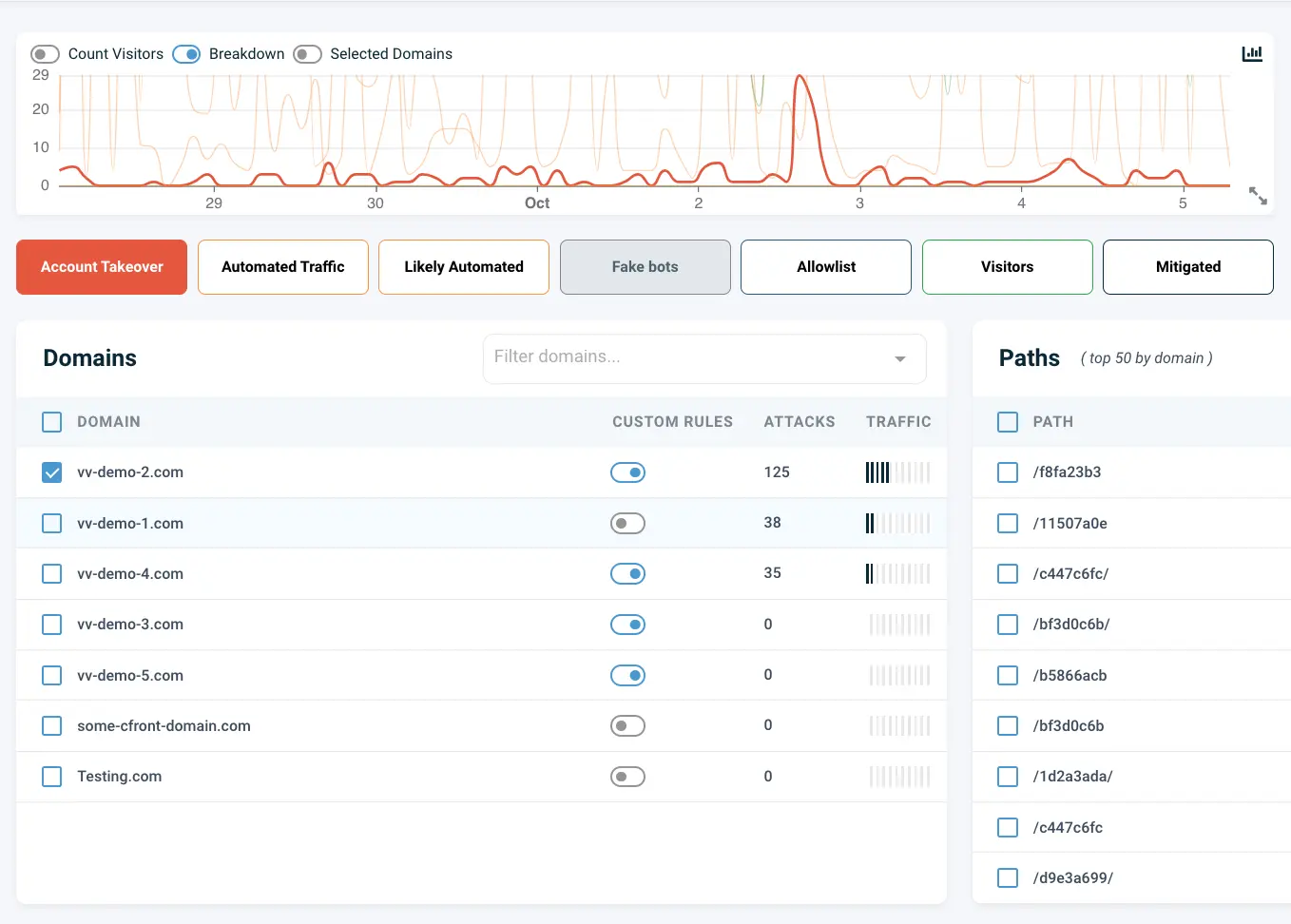

From one Command & Control centre you can manage and mitigate high-risk automated traffic attacks across hundreds of web and API endpoints. Set the policies you want once, and our AI Copilot applies and updates them dynamically as new threats appear over time.

AI Copilot

Our AI Copilot works constantly in the background to assess new threats and ensure you're always protected. It's like having your own dedicated security resource working for you, constantly looking at risks and dynamically creating new rules for each threat type, according to your traffic.

Domains

Our cloud architecture allows you to manage hundreds of sites and APIs from one console, ideal for large brands or for agencies that manage portfolios of sites. You set your policy for all sites, and our AI Copilot does the rest.

Paths

Protect your critical paths and APIs from automated bot activity such as account takeover, shopping cart abuse, or IP theft. Keep your critical paths hidden from bots by enforcing protection without listing them in robots.txt.
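To see why robots.txt is a poor place to protect sensitive paths, consider the minimal Python sketch below. It is an illustration only, not part of the VerifiedVisitors product: the `disallowed_paths` helper and the sample file contents are hypothetical. It shows that any client, including a bot, can read a public robots.txt and extract every path the site asked crawlers to avoid.

```python
def disallowed_paths(robots_txt: str) -> list[str]:
    """Return every path named in a Disallow rule of a robots.txt file."""
    paths = []
    for line in robots_txt.splitlines():
        # Drop trailing comments and surrounding whitespace.
        line = line.split("#", 1)[0].strip()
        field, _, value = line.partition(":")
        if field.strip().lower() == "disallow" and value.strip():
            paths.append(value.strip())
    return paths


# Hypothetical robots.txt contents, for illustration only.
EXAMPLE = """\
User-agent: *
Disallow: /admin/login
Disallow: /api/v1/checkout  # payment flow
Allow: /
"""

if __name__ == "__main__":
    # A badly behaved bot can treat this list as a map of interesting targets.
    print(disallowed_paths(EXAMPLE))
```

Because robots.txt is advisory and publicly readable, listing a path there tells bots where to look rather than keeping them out.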

Bot Threats

What does Ticket Scalping mean?

Understanding Ticket Scalping: A Comprehensive Guide

Isabelle Arnfeld

Bot Threats

Price Scraping Bots: How to Stop Them Spying on ECOM Sites

Revealing the Secret Undercover Lives of Price Scraping Bots

Isabelle Arnfeld

Frequently Asked Questions

How are bots detected?

Detecting bots by simply monitoring your traffic and employing CAPTCHA is like looking for the proverbial needle in a haystack, and it leads to a frustrating user experience, because real users inevitably get challenged as if they were bots. Traditional bot detection relies on fingerprints or CAPTCHA. Although this used to be effective, the rise of API traffic and of widespread SaaS bot services, which run on botnets of domestic IPs, has rendered these basic detection methods obsolete.

What are bot attacks?

Bot attacks encompass malicious activities performed by automated programs. These include scraping, brute force attacks, and denial of service attacks. Robust bot detection techniques and software are essential to prevent such attacks.

How effective is IP Reputation for detecting bots?

IP addresses with known associations with bad actors or botnets can raise suspicion, but they are not sufficient on their own for detecting bots and often lead to false positives. Many commercial bot services now use residential proxies and real machines. Bot farms run on actual mobile devices that pass all the associated fingerprinting and mobile tests, and they hide behind mobile proxies that also cover many thousands of legitimate mobile devices, so they can't simply be blocked.
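The toy Python sketch below illustrates both failure modes of an IP-only blocklist. Everything in it is an assumption made for illustration: the `Session` type, the `evaluate_ip_blocklist` helper, the addresses (taken from reserved documentation ranges) and the ground-truth `is_bot` flags, which no real system would have. It is not how VerifiedVisitors detects bots.

```python
from typing import NamedTuple


class Session(NamedTuple):
    ip: str
    is_bot: bool  # ground truth, known only in this toy example


def evaluate_ip_blocklist(sessions, blocklist):
    """Return (false_positives, missed_bots) for an IP-only blocking policy."""
    # Legitimate users blocked because they share a listed IP.
    false_positives = sum(1 for s in sessions if s.ip in blocklist and not s.is_bot)
    # Bots that get through because their IP is not listed.
    missed_bots = sum(1 for s in sessions if s.ip not in blocklist and s.is_bot)
    return false_positives, missed_bots


# Hypothetical traffic sample.
SESSIONS = [
    Session("203.0.113.7", True),     # bot behind a shared mobile proxy
    Session("203.0.113.7", False),    # real user on the same proxy
    Session("203.0.113.7", False),    # another real user on the same proxy
    Session("198.51.100.23", True),   # bot on an unlisted residential proxy
    Session("192.0.2.10", False),     # ordinary visitor
]
BLOCKLIST = {"203.0.113.7"}

if __name__ == "__main__":
    print(evaluate_ip_blocklist(SESSIONS, BLOCKLIST))
```

In this sample, blocking the shared proxy address turns away two real users, while the bot on the unlisted residential address still gets through.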

Are there specific industries more susceptible to online fraud and bots?

Yes, industries like finance, e-commerce, and social media are particularly vulnerable due to the high volume of online transactions and interactions.
